I work as an independent consultant advising organizations on how to improve their software development practice. This is a very broad theme. It touches on every aspect of software development from design, coding and testing, to organizational structure, leadership and culture.

My work is structured, perhaps unsurprisingly, around Continuous Delivery (CD). I believe that CD is important for a variety of reasons. It is an approach grounded in the ideas of “Lean Thinking” and based on the application of the Scientific Method to software development. It is driven by a rapid, high-quality, iterative, feedback-guided approach to everything that we do, giving us deeper insight into our work, our products and our customers.

All of this is powerful in its impact, but there is another dimension that matters a lot in certain industry sectors.

The majority of my clients work in regulated industries: Finance, Health-care, Gambling, Telecoms, Transport of different kinds, and others.

In the later part of my career, my own background as a developer and technical leader was in Finance, building Exchanges and Trading Systems, which are also highly regulated.

Nevertheless, when describing the Continuous Delivery approach to people, I am regularly asked, “Yes, that all sounds very good, but it can’t possibly work in a regulated environment, can it?”

I have come to the opposite conclusion. I believe that CD is an essential component of ANY regulated approach. That is, I believe that it is not possible to implement a genuinely compliant, regulated system in the absence of CD!

Now, that is a strong statement, so what am I talking about?

What are the goals of Regulatory Compliance?

All of the regulatory regimes that I have seen are, in essence, focussed on two things:

1) Trying to encourage a professional, high-quality, safe approach to making change.

2) Providing an audit-trail to allow for some degree of oversight and problem-finding after a failure.

There is a third thing, but it is really secondary compared to these two. The third thing is that we need to be able to demonstrate to regulators and auditors the safety, quality and professionalism of our work, and our ability to work in a traceable (audited) way.

How does CD Help?

I believe that the highest quality approach that we know of for creating software of any kind is a disciplined approach to CD. The evidence, summarized in the book “Accelerate”, is on my side: https://amzn.to/2P2aHjv

So if our regulators require a professional, high-quality, safe approach to making change, the evidence says that they should be demanding CD (and structuring their regulations to encourage it!).

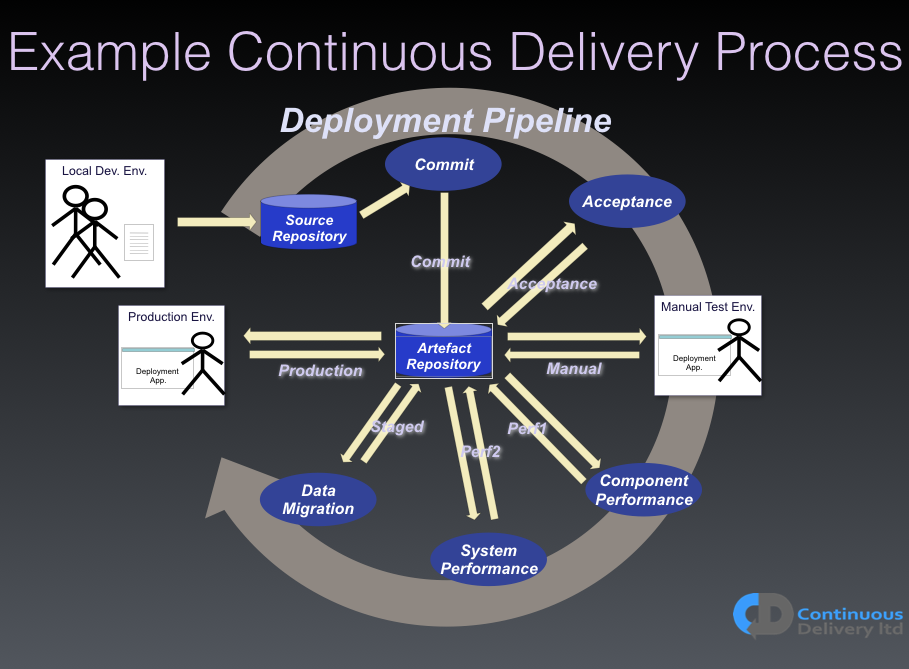

One of the core ideas in CD is the concept of the “Deployment Pipeline”, a channel through which all change destined for production flows. A Deployment Pipeline is used to validate changes, and to reject those that don’t look good enough. It is a platform, an organizing concept, and a falsification mechanism for production-destined change. It is also the perfect vehicle for compliance.

All production change flows through the Deployment Pipeline. This means that, almost as a side-effect, we have access to all of the information associated with any change, and so we can create a definitive, authoritative audit-trail.
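The idea of the Pipeline as a falsification mechanism that produces an audit-trail as a by-product can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular Pipeline tool works: each stage is modeled as a function that takes a commit ID and returns pass or fail, and the names `Pipeline`, `AuditEvent` and `evaluate` are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# A stage is a named check that returns True if the change looks good enough.
Stage = tuple[str, Callable[[str], bool]]


@dataclass
class AuditEvent:
    commit: str      # the commit (or release candidate) being evaluated
    stage: str       # which stage produced this result
    passed: bool
    timestamp: str   # UTC, ISO 8601


@dataclass
class Pipeline:
    stages: list[Stage]
    audit_log: list[AuditEvent] = field(default_factory=list)

    def evaluate(self, commit: str) -> bool:
        """Run the stages in order; the first failing stage rejects the change."""
        for name, check in self.stages:
            passed = check(commit)
            # Every outcome is recorded, whether the change survives or not.
            self.audit_log.append(AuditEvent(
                commit, name, passed, datetime.now(timezone.utc).isoformat()))
            if not passed:
                return False  # rejected: later stages never run
        return True  # survived every attempt to falsify it
```

The design point is that the audit record is written by the same code that makes the accept/reject decision, so the trail cannot drift away from what actually happened.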

(See links at end for more info on Deployment Pipelines & CD in general)

Figure 1 shows a diagram of an example Deployment Pipeline. Remember, there is no other route to production for any change.

If we tie together our requirement-management systems with our Version Control System (VCS), through something as simple as a commit message tagged with the story, or bug, ID that this commit is associated with, then we have complete traceability. We can tell the story of any change from end-to-end.
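As a rough sketch of that join, the following assumes Jira-style IDs such as `TRADE-142` (an illustrative example, not a real project) and shows how commits can be linked back to the requirements they implement. The function names `story_ids` and `trace` are hypothetical.

```python
import re

# Assumed convention: IDs look like Jira keys, e.g. "TRADE-142" (project key, dash, number).
STORY_ID = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def story_ids(message: str) -> list[str]:
    """Return the story or bug IDs referenced in a commit message, in order, without duplicates."""
    seen: list[str] = []
    for match in STORY_ID.findall(message):
        if match not in seen:
            seen.append(match)
    return seen


def trace(commits: dict[str, str], requirements: dict[str, str]) -> dict[str, list[str]]:
    """Join commits (sha -> message) to requirements (ID -> description) via their IDs.

    Returns a map from each requirement ID to the commits that worked on it. IDs that
    appear in commits but not in the requirements system are kept, so they stand out.
    """
    history: dict[str, list[str]] = {rid: [] for rid in requirements}
    for sha, message in commits.items():
        for rid in story_ids(message):
            history.setdefault(rid, []).append(sha)
    return history
```

A pattern this loose will also match strings such as `UTF-8`; a real convention would probably restrict matches to the project keys your requirements tool actually uses.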

We can answer any of the following questions (and many more):

- “Who captured the need for this change?”

- “Who wrote the tests?”

- “Who committed changes associated with this piece of work?”

- “Which tests were run?”

- “This change was rejected; what failed, causing it to be rejected?”

- “Who was involved with any manual testing?”

- “Who approved the release into production?”

- “Which version of the OS, Database, programming language, etc. was deployed and used?”

- “Which version of the deployment script/tooling was used?”

- …

All of this information is available as a side-effect of building a Deployment Pipeline. In fact, it is quite hard to imagine a Pipeline that doesn’t give you access to this information. I sometimes describe one of the important properties of Deployment Pipelines as “providing a keyed search-space for all of the information associated with any production change”. This is Gold for people working in compliance, regulation and audit.
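A keyed search-space can be illustrated with a simple in-memory index. This sketch assumes that every Pipeline event (commit, test run, manual test, approval, deployment) can be tagged with the story ID it belongs to; the `Record` and `SearchSpace` types and the `kind` values are hypothetical, and a real Pipeline would keep this data in a persistent store.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    story_id: str   # the key taken from the commit message
    kind: str       # e.g. "commit", "test", "manual-test", "approval", "deploy"
    actor: str      # the person or tool responsible
    detail: str     # test name, environment, version, and so on


class SearchSpace:
    """Every piece of pipeline information, indexed by the story or bug ID it belongs to."""

    def __init__(self) -> None:
        self._by_story: dict[str, list[Record]] = defaultdict(list)

    def add(self, record: Record) -> None:
        self._by_story[record.story_id].append(record)

    def query(self, story_id: str, kind: str) -> list[Record]:
        # .get() avoids creating empty entries for unknown IDs.
        return [r for r in self._by_story.get(story_id, []) if r.kind == kind]

    def who(self, story_id: str, kind: str) -> list[str]:
        """Answer questions like 'Who approved the release?' for one story."""
        return [r.actor for r in self.query(story_id, kind)]
```

Questions from the list above then become one-line queries, for instance `space.who("TRADE-142", "approval")` for “Who approved the release into production?”.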

The Deployment Pipeline, when properly implemented, is in the perfect place to act as a platform for regulatory functions and data-collection. If we can mine this Gold, if we can identify the needs of the people working in these areas, we can implement behaviors, in the Pipeline, to support and enhance their efforts.

Here are a few examples from my own, real-world, experience…

- Generate an automatic audit-trail for all production change.

- Implement access-control to the Pipeline so that we can audit who did what.

- Implement “compliance-gates” to automate rules, e.g. “We require sign-off for release”.

  Solution: Use access-control credentials (and people’s roles) to automate “sign-offs”.

- Reject any commit that fails any test.

  (Most regulators *love* this idea when you explain it to them!)

- In an emergency we may need to break a rule.

  Solution: Allow manual override of rules, e.g. “Reject any commit that fails a test”, but audit the decision and who made it.

  (Regulators love that too. They recognize that bad things sometimes happen, but want to see the decision-making.)
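A compliance-gate with an audited manual override might look something like the following. It is a minimal sketch assuming that the Pipeline’s access-control can tell us each user’s role; the class names, the role names and the rule itself (“reject any candidate with a failing test”) are illustrative only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GateDecision:
    candidate: str
    allowed: bool
    reason: str
    decided_by: str   # "pipeline" for automated decisions, otherwise a user
    at: str           # UTC timestamp


@dataclass
class ComplianceGate:
    """Hypothetical gate: reject any candidate with a failing test, unless an authorized person overrides it."""
    override_roles: set[str]   # roles permitted to break the rule in an emergency
    roles: dict[str, str]      # user -> role, assumed to come from the pipeline's access control
    decisions: list[GateDecision] = field(default_factory=list)

    def _record(self, candidate: str, allowed: bool, reason: str, who: str) -> GateDecision:
        decision = GateDecision(candidate, allowed, reason, who,
                                datetime.now(timezone.utc).isoformat())
        self.decisions.append(decision)  # every decision is audited
        return decision

    def check(self, candidate: str, failed_tests: list[str]) -> GateDecision:
        if failed_tests:
            return self._record(candidate, False, "failed tests: " + ", ".join(failed_tests), "pipeline")
        return self._record(candidate, True, "all tests passed", "pipeline")

    def override(self, candidate: str, user: str, justification: str) -> GateDecision:
        if self.roles.get(user) not in self.override_roles:
            # Refused override attempts are audited too.
            return self._record(candidate, False, f"override refused for {user}", user)
        return self._record(candidate, True, f"manual override: {justification}", user)
```

The override does not bypass the gate; it is another decision recorded by the gate, which is what makes the emergency path visible to a regulator after the fact.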

What Does It Take?

Assuming that you have a working Deployment Pipeline (creating one is outside the scope of this article; see the links below), the first practical step to implementing “Continuous Compliance” is the one I have already mentioned: connect your Pipeline, via commit messages, to your requirements system!

Use the IDs from Jira or Trello (or whatever) and tag every commit with a Bug or Story ID.

That should give you a key-based system that joins all of the information that you collect together and makes it searchable (and so amenable to automation, reporting, and tool-building).
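One way to make the tagging convention hard to forget is a git `commit-msg` hook. Git runs this hook with the path of the file holding the proposed message as its first argument, and a non-zero exit status aborts the commit. The sketch below assumes Jira-style IDs such as `TRADE-142`; the ID pattern and the error wording are illustrative.

```python
#!/usr/bin/env python3
"""Hypothetical git commit-msg hook: save as .git/hooks/commit-msg and make it executable."""
import re
import sys

# Assumed convention: the message must contain a Jira-style key such as "TRADE-142".
STORY_ID = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def has_story_id(message: str) -> bool:
    # Ignore comment lines that git adds to the message template.
    lines = [line for line in message.splitlines() if not line.startswith("#")]
    return STORY_ID.search("\n".join(lines)) is not None


def main(argv: list[str]) -> int:
    # argv[1] is the file containing the proposed commit message.
    with open(argv[1], encoding="utf-8") as f:
        message = f.read()
    if has_story_id(message):
        return 0
    sys.stderr.write("Commit rejected: tag the message with a Story or Bug ID, e.g. 'TRADE-142'.\n")
    return 1


if __name__ == "__main__":
    sys.exit(main(sys.argv))
```

Client-side hooks can be skipped with `git commit --no-verify`, so the same check is worth repeating as an early Pipeline stage, where it cannot be bypassed.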

The next step is to add access-control to Pipeline tools so that you can track human decision-making.

Continuous Delivery is defined as “working so that your software is always in a releasable state”. This does not eliminate the need for human decision-making. Where applicable and appropriate, capture the outcome of human decisions via the Pipeline tools.

The “Lean” part of CD means that we are trying to reduce the work associated with the process to a minimum. We want to eliminate “waste” wherever we find it, and so we need to be smart about the things that we do and maximize their value.

For example, regulation often says that we need to document changes to our production systems. I agree! However, I don’t want to waste my, and my teams’, time writing documents that will only ever be read by regulators. Instead, I would like to find things that I must do to create useful software and do them in a way that allows me to use them for other purposes, like informing regulators. One way to think about this is that we are trying to achieve regulatory compliance as a side-effect of how we work.

In order to design and develop software I must have some idea of what I am trying to achieve (a requirement of some kind), I must work to fulfil that need (write code of some kind) and I must check that the need is fulfilled (a test of some kind).

What if I could do only these things, but do them in a way that allowed me to use the information that I generate for more than only these things?

Suppose my requirements were defined in a way that documented changes to the behavior of the system and why they are useful (sounds a bit like “User Stories”, doesn’t it?), and suppose I adopted some simple conventions in the way that I captured and organized this information, to aid automation. Then I would have descriptions of changes that contribute to, and make sense as, release notes, and so I would be able to automate some of the documentation associated with a release in a regulated environment.

If I drive these requirements from examples of the desirable behaviors of my system, those examples define the behaviors in a way that allows me to automate them and use them as specifications for the behavior of the system: Executable Specifications. These automated specifications (aka “Acceptance Tests”) can be used to structure the development activities. At the start of each new piece of work we begin by creating our “Executable Specifications”, then we practice TDD, in fine-grained form, to incrementally evolve a solution to meet these specifications.
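A very small flavor of an Executable Specification, written with Python’s `unittest`: each test states one example of desirable behavior in the language of the problem domain. The `Exchange` class is a hypothetical in-memory stand-in; in a real Pipeline, acceptance tests would exercise the deployed system through its public interfaces rather than an object in the same process.

```python
import unittest


class Exchange:
    """Stand-in for the system under test (hypothetical)."""

    def __init__(self) -> None:
        self.balances: dict[str, int] = {}

    def deposit(self, account: str, amount: int) -> None:
        self.balances[account] = self.balances.get(account, 0) + amount

    def withdraw(self, account: str, amount: int) -> bool:
        balance = self.balances.get(account, 0)
        if balance < amount:
            return False  # refuse, leaving the balance untouched
        self.balances[account] = balance - amount
        return True


class WithdrawalSpecification(unittest.TestCase):
    """Examples of desirable behavior, written before the code that satisfies them."""

    def setUp(self) -> None:
        self.exchange = Exchange()

    def test_a_trader_can_withdraw_funds_they_hold(self) -> None:
        self.exchange.deposit("trader-1", 100)
        self.assertTrue(self.exchange.withdraw("trader-1", 40))
        self.assertEqual(self.exchange.balances["trader-1"], 60)

    def test_a_trader_cannot_withdraw_more_than_they_hold(self) -> None:
        self.exchange.deposit("trader-1", 100)
        self.assertFalse(self.exchange.withdraw("trader-1", 150))
        self.assertEqual(self.exchange.balances["trader-1"], 100)


if __name__ == "__main__":
    unittest.main()
```

The test names read as statements of required behavior, which is what lets the same artifact serve as documentation for a regulator and as a gate in the Pipeline.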

These activities, combined, give us an extremely high-quality approach to developing solutions. If we record them, they also provide us with the “whys”, “whens” and “whos” that allow us to tell the story of the work done.

We can “grow” the system via many small, low-risk, audited, commits. Each change is traceable, audited and of very high quality. Each change is small and simple, verified by Continuous Integration, and so safer.

We can make a separate decision about when to release each tiny change into production, and we will have an automated audit-trail of all of the actions and decisions that contributed to that release.

This approach is demonstrably, measurably, higher-quality and safer than any other that we know of.

All changes, whatever their nature, are treated in the same way. There are no special cases for bug-fixes or emergency fixes. No special “back-doors” to production. All production change flows through the same process and mechanism and so is traceable and verified to the level of testing that we decide to apply in our Pipeline.
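A simple way to enforce “no back-doors” is for the deployment step to accept only artifacts that the Pipeline itself produced. The sketch below assumes release candidates are identified by a SHA-256 hash of the built artifact; `ReleaseCandidates` and `deploy` are hypothetical names, and the actual deployment mechanics are left out.

```python
import hashlib
from typing import Optional


class ReleaseCandidates:
    """Hypothetical record of artifacts that passed every pipeline stage, keyed by content hash."""

    def __init__(self) -> None:
        self._approved: dict[str, str] = {}  # sha256 hex digest -> version

    def register(self, artifact: bytes, version: str) -> str:
        digest = hashlib.sha256(artifact).hexdigest()
        self._approved[digest] = version
        return digest

    def version_of(self, artifact: bytes) -> Optional[str]:
        return self._approved.get(hashlib.sha256(artifact).hexdigest())


def deploy(artifact: bytes, candidates: ReleaseCandidates, environment: str) -> str:
    """The only route to production: refuse anything the pipeline did not produce."""
    version = candidates.version_of(artifact)
    if version is None:
        # Bug-fixes and emergency fixes get no special treatment here.
        raise PermissionError("artifact did not come through the Deployment Pipeline")
    # ... the automated deployment itself would go here ...
    return f"deployed {version} to {environment}"
```

Hashing the artifact, rather than trusting a version label, means that a hand-patched build is rejected even if someone gives it the same version number.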

How else could we minimize work?

Regulated industries often require various gate-keeping steps, sign-offs for example. Unfortunately, the evidence is against these as a successful approach to improving quality and safety. In fact, the more complex approaches to gatekeeping, like “Change Approval Boards”, are negatively correlated with software quality! The more complex the compliance mechanisms around change, the lower the quality of the software (see page 49 of the 2019 “State of DevOps Report”).

Nevertheless, most regulatory frameworks were designed before this kind of analysis was available. Most regulatory frameworks were built on an assumption of a Waterfall style, gated process. So if we want to achieve “Continuous Compliance” in a real-world environment, we must cope with regulation that is not quite the right shape for this very different paradigm. That is OK, because this new paradigm is much more flexible than the old.

Over time I hope, and expect, regulation to adapt, to catch-up to these more effective ways of working. It is, after all, a better way to fulfil the underlying intent of any regulatory mechanism for software.

I believe that there have been some small moves, at the level of interpretation of regulation, in some industries. Over time I expect that the regulations themselves will change to assume, or encourage, CD, rather than only allow interpretations that permit it.

I have had success with regulators, and people working in compliance organizations, in several different industries by engaging with them and demonstrating that what I am trying to achieve is in their interest. By bringing them on-board with the change, and helping to solve the real problems that people in these roles regularly face, you can not only get approval to interpret the regulations in ways more amenable to CD, but also gain allies who will work to help you.

Here are a few techniques that I have used and advised my clients to adopt:

Example: When you are being audited, assign developers to help the auditors. Their job is to help, to give the auditors all of the information that they need, but also to observe what is going on and to treat the audit as a chance to learn what the auditors really need. This is a requirements-gathering opportunity! Then take what you have learned and implement new checks in your Pipeline to stop errors sneaking through. Improve the audit-trail so that a future auditor can more easily see what happened. Create new reports on your Pipeline-search-space to tell the story in a way that meets the needs of the auditor.

Example: If your regulatory framework requires a code-review, how do you do that well and keep up the pace of work that makes CI (and CD) work best? In my experience, Pair-Programming, coupled with regular pair-rotation, gives all of the benefits of code-review, and more, and is acceptable to regulators as a way to demonstrate that the code has been reviewed and that there is some independent oversight/verification of change.
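If pairing is your review evidence, the Pipeline can check for it. This sketch assumes pairs record the second person with a `Co-authored-by:` trailer in the commit message (a convention popularized by GitHub); the functions `unpaired` and `distinct_pairs` are hypothetical, and commits are given as `(sha, author_email, message)` tuples.

```python
import re

# Assumed convention: "Co-authored-by: Name <email>" on its own line in the message.
CO_AUTHOR = re.compile(r"^Co-authored-by:[ \t]*(.+?)[ \t]*<([^>]+)>[ \t]*$", re.MULTILINE)


def reviewers(author_email: str, message: str) -> set[str]:
    """E-mail addresses of co-authors other than the commit's author."""
    return {email for _, email in CO_AUTHOR.findall(message) if email != author_email}


def unpaired(commits: list[tuple[str, str, str]]) -> list[str]:
    """Return the SHAs of commits with no second pair of eyes recorded."""
    return [sha for sha, author, message in commits if not reviewers(author, message)]


def distinct_pairs(commits: list[tuple[str, str, str]]) -> set[frozenset[str]]:
    """Pairings seen in the history: a rough indicator of pair rotation."""
    return {frozenset({author, other})
            for _, author, message in commits
            for other in reviewers(author, message)}
```

Unpaired commits need not be rejected outright; listing them alongside the rest of the audit-trail may be enough for a regulator, depending on what you have agreed.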

Example: Your regulatory framework requires sign-off from a developer, operations person and tester before release. Use the access-control tools in your Pipeline to enforce this policy, and audit it.
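The three-role sign-off in this example reduces to a set comparison. The sketch assumes each user holds exactly one role, as reported by the Pipeline’s access-control, and that sign-offs are captured through the Pipeline tools; `REQUIRED_ROLES` and `release_allowed` are illustrative names.

```python
# Assumed from the regulation in this example: one sign-off from each of these roles.
REQUIRED_ROLES = {"developer", "operations", "tester"}


def release_allowed(sign_offs: list[str], roles: dict[str, str]) -> tuple[bool, set[str]]:
    """Check the sign-offs on a release candidate.

    `sign_offs` lists the users who signed off via the pipeline's access control;
    `roles` maps each known user to their single role. Unknown users count for nothing.
    Returns (allowed, roles still missing).
    """
    covered = {roles[user] for user in sign_offs if user in roles}
    missing = REQUIRED_ROLES - covered
    return (not missing, missing)
```

Returning the missing roles, not just a yes/no, gives the audit-trail a reason for every blocked release.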

Example: Regulation requires a separation of roles. Devs can’t access production, Ops can’t access Dev environments. Fine, I prefer to take it a step further. “No-one can access production!”. All production access is through automation, e.g. Infrastructure as Code, automated deployment, automated monitoring etc.

These are a few techniques that I have seen applied, and applied myself, in regulated environments. My experience, across the board, has been that regulators prefer these approaches, once they come to understand them, because they provide a better quality experience all around.

What Is Not Covered by CD?

Some regulatory regimes require significant documentation describing the architecture and design of the system as well as describing any “significant change” to its design.

I believe that these are another hang-over from Waterfall thinking. I think that the intent is that by asking for such documentation regulators are attempting to encourage people to think more carefully about change and to approach it with more caution.

I believe that a sophisticated approach to test-automation is a better approach. Nevertheless, current regulation usually requires documentation of the system architecture and significant changes to it.

I tend to approach this part of the problem in more conventional ways. Write the architecture documents as you always have, except try to ensure that the detail is not too precise. What you need to achieve is an accurate, but slightly vague, description of your system. For example, describe the principal services and perhaps how they communicate, along with the main information flows and stores, but don’t go into the detail of implementation, code or schemas. Leave room for the system to evolve over time while still meeting the architectural description.

Try to agree with your regulators what “significant change” entails: what are they nervous of? They probably won’t tell you, or at least they won’t be very definite; it is not their job. However, what you are looking for is a way to ensure that the massive flood of changes that you want to apply (in a CD context) doesn’t count as significant.

Even these tiny, frequent changes will be audited, documented (by tests), reviewed (by pair-programming) and have things like (autogenerated) release-notes associated with them, but they won’t count as “significant” in the sense of requiring new documentation (beyond the automated tests and requirements).
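Once some criteria are agreed, the Pipeline can flag the rare change that does count as significant, so that it gets the extra documentation step while everything else flows through. The patterns below are purely hypothetical examples of such criteria, expressed as path patterns over the files a commit touches.

```python
from fnmatch import fnmatch

# Hypothetical criteria agreed with the regulator: changes touching these areas
# count as "significant" and need updated architecture documentation.
SIGNIFICANT_PATTERNS = [
    "schema/*",                  # persistent data structures
    "interfaces/*",              # external APIs and message formats
    "services/*/service.yaml",   # adding, removing or re-wiring a service
]


def significant_paths(changed_paths: list[str]) -> list[str]:
    """Return the changed paths that match an agreed 'significant change' criterion."""
    return [path for path in changed_paths
            if any(fnmatch(path, pattern) for pattern in SIGNIFICANT_PATTERNS)]
```

An empty result means the change is documented by its tests and requirements alone; a non-empty one names exactly which agreed criterion was triggered.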

Again, I hope, and expect, that regulation will change over time, to allow for these more effective forms of documentation to be used instead of prose doing a poor job of describing some kind of design intent.

I am not against documentation that is useful in helping people to understand systems. I like to create and maintain a high-level description of the system architecture that aids people in navigating their way around the system. I am just not sure how this helps the goals of regulation, and I don’t want to be forced to document, in prose, every change to my production system. That is the role of automated tests, which do a better job because they are a more accurate description of the behavior of the code (they must be, because they passed). They are also necessary for other reasons beyond regulatory compliance, and so I am going to create them anyway.

Conclusion

I have worked in regulated industries before and after I learned how to practice Continuous Delivery. All of my non-CD experience, including what I have, over several decades in consultancy roles, observed in client organizations, leads me to the belief that in the absence of CD, regulatory compliance is practiced “more by the breach than the observance”. That is to say, most regulated organizations usually have a long list of “compliance-breaches” that they, one day, hope to catch-up on.

The usual organizational responses that I have observed are either to try to slow the pace of change to gain control (this is counter-productive, because slow, heavy-weight processes are negatively correlated with quality), or to skate close to the edge: keep working and do the bare minimum to keep regulators happy. Neither of these is a desirable, or a high-quality, outcome!

I have seen the CD approach remove compliance as an obstacle!

I have seen organizations move from taking weeks, sometimes months, to ensure that releases into production were “compliant” with regulation (and never making it), to being able to generate genuinely compliant release candidates multiple times per day, along with all of the documentation and approvals.

In fact, when working at LMAX on creating one of the highest performance financial exchanges in the world, it was more difficult for us to release a change that wasn’t compliant than one that was. Our Deployment Pipeline enforced compliance, and so the only way we could avoid that was to break, or subvert, the Pipeline.

So when I say “I believe that CD is an essential component of ANY regulated approach. That is, I believe that it is not possible to implement a genuinely compliant, regulated system in the absence of CD!” I really do mean it.

More Info

Continuous Delivery (Book): https://www.amazon.co.uk/Continuous-Delivery-Deployment-Automation-Addison-Wesley/dp/0321601912/

Rationale for CD (Talk): https://www.youtube.com/watch?v=U_DuDgkoSRA

Optimizing Deployment Pipelines (Talk): https://www.youtube.com/watch?v=gDgAVqkFYWs

Accelerate: The Science of Lean Software and DevOps (Book): https://amzn.to/2P2aHjv

Adopting CD at Siemens Healthcare (Article): https://www.infoq.com/articles/continuous-delivery-teamplay/

Excellent article. My business partner and I work in the Clinical Trials industry and from day 1 we set out to use Continuous Integration (and Delivery) to meet the regulatory requirements. We use GitLab to capture requirements, evidence of code reviews (of merge requests), manage pipelines and many other aspects of the process. Auditors have a simple test “Show me evidence that you do X or I must assume that X did not happen”. Paper-based processes make recording evidence an extra step to the process itself and will always lack the fidelity of a recording of what actually happened. In contrast, when your process is performed within a structured tool that captures audits of all your activities this evidence gathers itself.

Yes, the “evidence gathers itself” is what I mean when I talk about the audit working as a side-effect of the Pipeline.

You end up in the position where you can answer pretty much any question about any production change, because you have the story in the data.

Great post!

Excellent… I would add one thing that is really worth mentioning: Risk Management.

You talked a bit about “many low risk changes”, but the developers, along with stakeholders, ought to be able to assign a risk level of some kind to both small and ‘significant’ changes… With well-designed, complete automated testing, those risks ought to be easily mitigated and documented as you outline.

Good, interesting article, thanks. Personally, what I love in the continuous delivery idea is the importance of metrics. It’s totally normal and obvious that you track the time of different tasks, and that you build your plans for a given project based on the metrics you’ve already obtained from similar projects. I use metrics like CFD (https://kanbantool.com/kanban-guide/cumulative-flow-diagram ) but I’ve seen many other ways and they work really well too. Do you maybe have any article about metrics in CD?

I have some stuff on my YouTube channel on that topic, but haven’t blogged on it yet.

There is a video on metrics here: Measuring Continuous Delivery

You can see other videos on the channel here: CD YouTube Channel
